Clement Creusot, PhD

PhD Webpage Archive

This is my PhD webpage. I submitted my PhD thesis on automatic 3D face landmarking in December 2011 and passed my viva in March 2012. This PhD project, in Computer Science at the University of York, was supervised by Dr. Nick Pears and Prof. Jim Austin. My work was to evaluate new techniques for automatic face landmarking and face recognition. I mainly focused on machine-learning-based 3D shape analysis techniques for facial landmarking. Landmarking using only 3D data is a difficult problem, especially in real-world non-cooperative cases where facial features have to be detected despite the presence of occlusions, pose variations, and spurious data in the captured 3D scans.

This page provides information about this three-year research project, with links to publications and downloadable source code. For information beyond the scope of this page, please contact me directly by email.

- 3D Shape Analysis

- Discrete Differential Geometry

- Local-Shape Descriptors

- Human-Face Analysis

- Automatic 3D Face Landmarking

- Feature-based Face Recognition

- Correspondence Problem

- 3D Surface Registration

- Graph and Hypergraph Matching

The first expected outcome of my research is a face recognition technique that is more robust to face orientation than the current state of the art.

The main applications of this kind of research are security (e.g. 3D CCTV) and human-machine interaction (e.g. vision in robotics).

Automatic landmark detection techniques can also help in any domain where faces have to be labelled in large databases, from computer vision to psychology.

Automatic landmark detection can be used in face recognition for two purposes. It can help to find correspondences between two faces before matching, and it can help to extract discriminative information about the face being processed. This information can be featural, supported by the neighbourhood of each landmark (e.g. the local shape around the landmarks), or configural, linked to the relationships between landmarks (e.g. the distances between them).
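As a rough illustration of configural information, the sketch below computes the pairwise Euclidean distances between a few labelled 3D landmarks. The landmark names, the coordinates and the function `configural_features` are made up for this example and are not taken from the project code.

```python
import math
from itertools import combinations


def configural_features(landmarks):
    """Return the Euclidean distance for every unordered pair of landmarks.

    `landmarks` maps a label to an (x, y, z) position. Pairs are keyed by
    their labels in alphabetical order so that the result is deterministic.
    """
    names = sorted(landmarks)
    return {
        (a, b): math.dist(landmarks[a], landmarks[b])
        for a, b in combinations(names, 2)
    }


# Hypothetical landmark positions (arbitrary units), for illustration only.
landmarks = {
    "nose_tip": (0.0, 0.0, 0.0),
    "left_eye_inner": (-3.0, 4.0, 0.0),
    "right_eye_inner": (3.0, 4.0, 0.0),
}

features = configural_features(landmarks)
for pair, distance in features.items():
    print(pair, distance)
```

Such distances are invariant to rigid motion of the face, which is one reason configural information is attractive when the pose is unknown.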

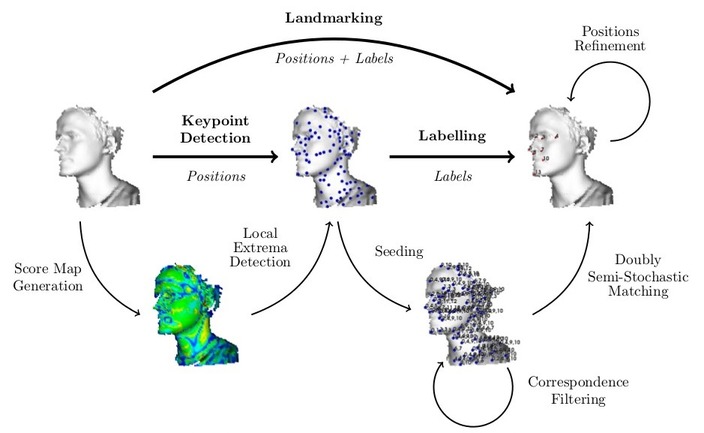

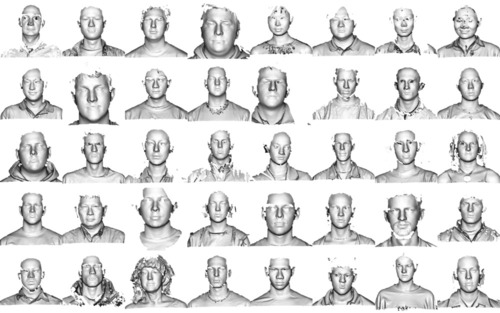

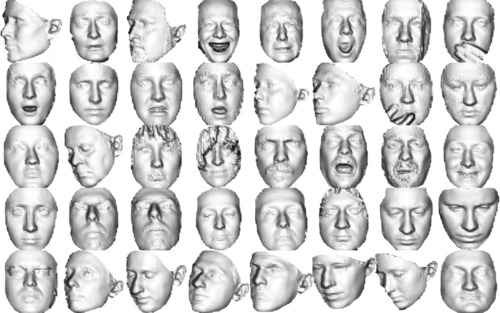

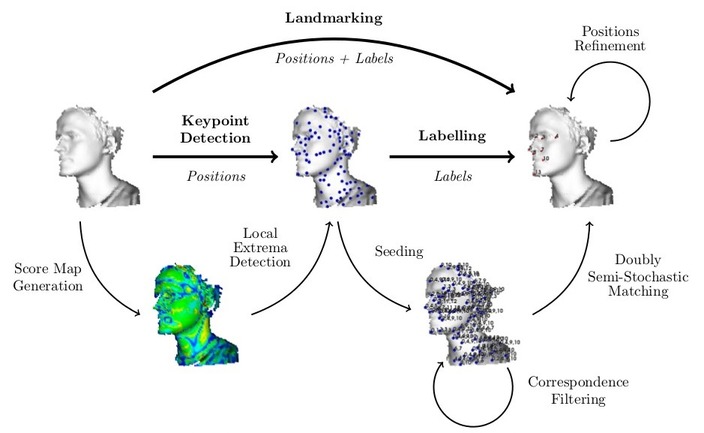

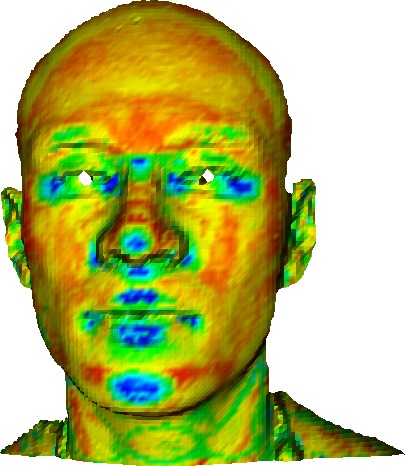

For landmark detection, I combine sets of simple fields to detect points, for example several types of curvature and volumetric information, as well as crest lines and isolines on the surface. The repeatability of those landmarks is tested using manually landmarked datasets such as the Face Recognition Grand Challenge (FRGC) database and the Bosphorus database.

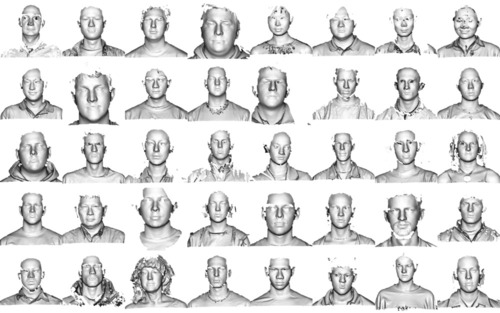

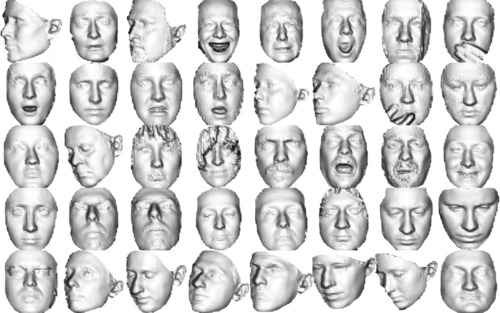

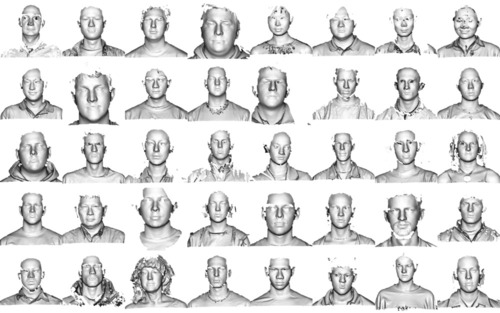

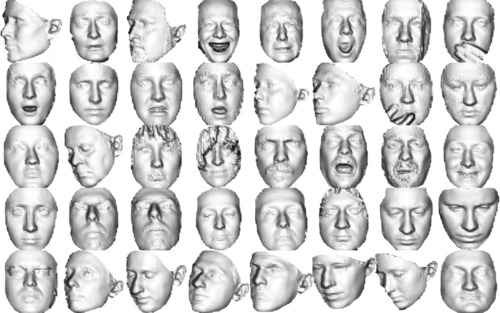

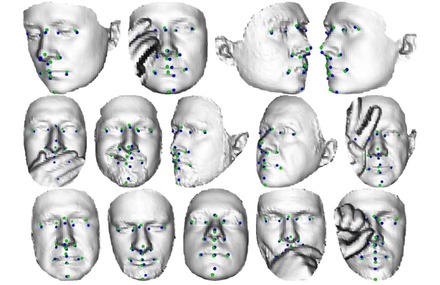

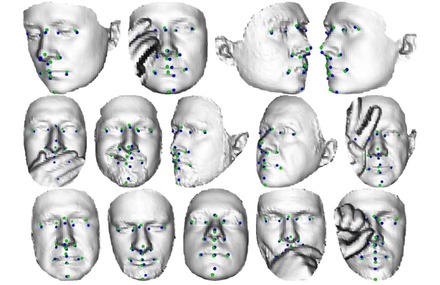

Fig: Example of meshes from the FRGC (left) and Bosphorus (right) databases.


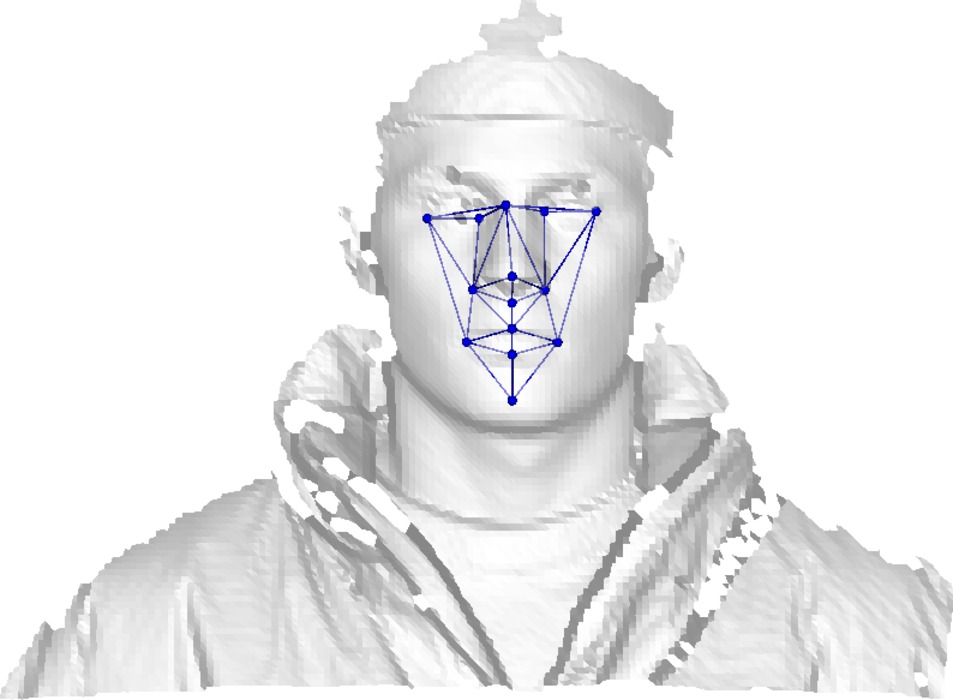

For both landmark labelling and face matching, we construct hypergraphs on the detected landmarks and match them against models using hypergraph matching techniques. The hypergraph structure in our code allows us to store and match relational information of any degree. For a complete hypergraph (all-to-all connectivity), we usually do not go above degree 3 for speed reasons.
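To give an idea of what relational information of degree 2 and 3 can look like, here is a minimal sketch of a complete hypergraph built over a handful of 3D points. It is not the structure used in our C++ code: the dictionary layout, the function names and the choice of sorted side lengths as hyperedge attributes are assumptions made for this example.

```python
import math
from itertools import combinations


def edge_attribute(points, edge):
    """Describe a hyperedge by the sorted distances between its vertices.

    A degree-2 edge gets one distance, a degree-3 edge gets the three side
    lengths of its triangle. Sorting makes the attribute independent of the
    order of the vertices.
    """
    return tuple(sorted(
        math.dist(points[i], points[j]) for i, j in combinations(edge, 2)
    ))


def build_complete_hypergraph(points, max_degree=3):
    """Map every vertex subset of size 2..max_degree to its attribute."""
    hypergraph = {}
    for degree in range(2, max_degree + 1):
        for edge in combinations(range(len(points)), degree):
            hypergraph[edge] = edge_attribute(points, edge)
    return hypergraph


# Four hypothetical landmark positions.
points = [(0, 0, 0), (3, 0, 0), (0, 4, 0), (0, 0, 12)]
hypergraph = build_complete_hypergraph(points)

# The number of hyperedges of degree k over n vertices is C(n, k).
counts = {k: math.comb(14, k) for k in (2, 3, 4)}
```

The last line shows why the degree is capped in the complete case: with 14 landmarks there are 91 pairs and 364 triples, but already 1001 quadruples, and the matching cost grows accordingly.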

Fig: Global Process Workflow


Fig: Detailed Landmarking Workflow


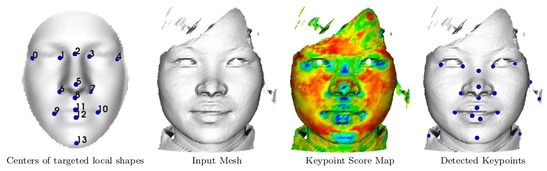

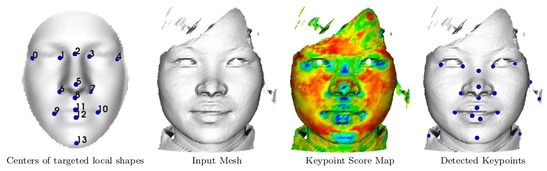

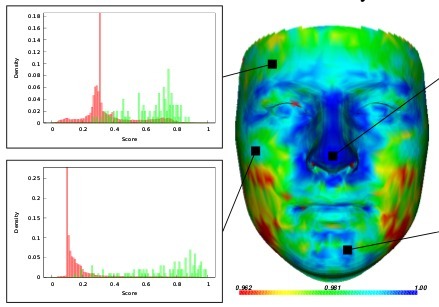

Our keypoint detection system evaluates, for every vertex, a score of being a point of interest by using a pre-computed statistical dictionary of local shapes. The correlation between the input vertices and the learnt features is computed using a large number of local shape descriptors (mainly based on discrete-differential-geometric properties) of the local surface patch around the vertex. The number and nature of the local descriptors, the size of the neighbourhoods on which they are computed, and the way they are combined can be optimized using basic machine learning techniques such as LDA (linear discriminant analysis) or AdaBoost (adaptive boosting).

Fig: Example of keypoint detection using a dictionary of 14 local shapes.
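The scoring idea can be sketched as follows. This is a simplified stand-in, not our actual detector: each dictionary entry stores an assumed mean and standard deviation per descriptor, a vertex is compared to each entry with a Gaussian-shaped similarity, and the descriptors are combined with uniform weights (in the real system the combination would be learnt, e.g. with LDA or AdaBoost). All names and numbers below are invented for the example.

```python
import math


def match_score(value, mean, std):
    """Gaussian-shaped similarity in (0, 1]; 1.0 when value equals the mean."""
    z = (value - mean) / std
    return math.exp(-0.5 * z * z)


def keypoint_score(descriptors, dictionary, weights=None):
    """Score one vertex against every learnt local shape; keep the best match.

    `descriptors` maps a descriptor name to its value at the vertex.
    `dictionary` maps a shape label to {descriptor name: (mean, std)}.
    `weights` optionally maps a descriptor name to its weight; uniform
    weights are assumed when it is omitted.
    """
    best = 0.0
    for shape in dictionary.values():
        names = list(shape)
        w = weights or {name: 1.0 / len(names) for name in names}
        score = sum(
            w[name] * match_score(descriptors[name], *shape[name])
            for name in names
        )
        best = max(best, score)
    return best


# Hypothetical two-entry dictionary with two descriptors per entry.
dictionary = {
    "nose_tip": {"mean_curvature": (0.8, 0.1), "gaussian_curvature": (0.5, 0.1)},
    "eye_corner": {"mean_curvature": (-0.4, 0.1), "gaussian_curvature": (0.2, 0.1)},
}

vertex_a = {"mean_curvature": 0.8, "gaussian_curvature": 0.5}   # nose-like
vertex_b = {"mean_curvature": 0.0, "gaussian_curvature": -0.5}  # unlike both

score_a = keypoint_score(vertex_a, dictionary)
score_b = keypoint_score(vertex_b, dictionary)
```

Keypoints would then be taken as local maxima of this score over the mesh, above some threshold.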


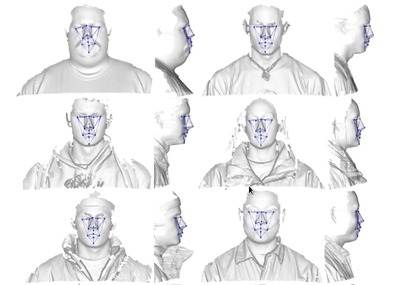

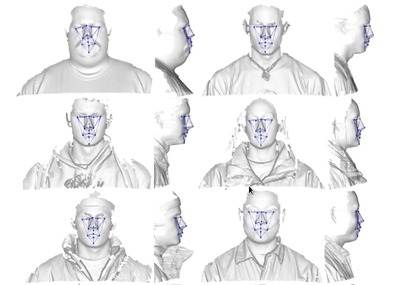

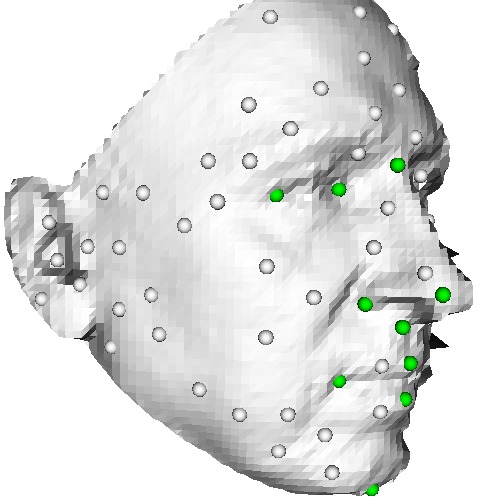

Here are examples of landmarking results obtained in October 2011 on the two databases using our keypoint-detection system coupled with a RANSAC geometric-registration technique.

Fig: Example of landmarking results for the FRGC (left) and Bosphorus (right) databases. In the right picture, the green points represent the ground truth and the blue points our landmarks.
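For readers unfamiliar with RANSAC, the sketch below shows the hypothesise-and-verify loop on a deliberately reduced problem: the transformation between the landmark model and the candidate keypoints is assumed to be a pure translation, so that the example needs no linear algebra library. A real geometric registration would also estimate a rotation. The function name, the tolerance and the data are assumptions made for this example.

```python
import math
import random


def ransac_translation(candidates, model, tolerance=1.0, iterations=200, seed=0):
    """Find the translation that maps the most model landmarks onto candidates."""
    rng = random.Random(seed)
    best_translation, best_inliers = None, -1
    for _ in range(iterations):
        # Hypothesis: one random candidate matches one random model landmark.
        c = rng.choice(candidates)
        m = rng.choice(model)
        t = tuple(ci - mi for ci, mi in zip(c, m))
        # Verification: count model landmarks that land close to a candidate.
        inliers = 0
        for p in model:
            moved = tuple(pi + ti for pi, ti in zip(p, t))
            if any(math.dist(moved, q) <= tolerance for q in candidates):
                inliers += 1
        if inliers > best_inliers:
            best_translation, best_inliers = t, inliers
    return best_translation, best_inliers


# Hypothetical landmark model and candidate keypoints (model shifted + outliers).
model = [(0.0, 0.0, 0.0), (-3.0, 4.0, 0.0), (3.0, 4.0, 0.0), (0.0, -5.0, 1.0)]
shift = (10.0, -2.0, 5.0)
true_points = [tuple(p + s for p, s in zip(point, shift)) for point in model]
outliers = [(50.0, 50.0, 50.0), (-20.0, 7.0, 3.0), (14.0, 30.0, -8.0)]
candidates = true_points + outliers

translation, inliers = ransac_translation(candidates, model)
```

The spurious candidates do not disturb the result because no hypothesis built from them gathers as much consensus as the correct one.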


For more details, please refer to the corresponding publications.

A Machine-learning Approach to Keypoint Detection and Landmarking on 3D Meshes

Clement Creusot, Nick Pears, and Jim Austin

Special Issue on 3D Imaging, Processing and Modeling Techniques - International Journal of Computer Vision (IJCV), 2013

- Paper [pdf, 5.1MB]

- Supplemental materials

- Springer Link: 10.1007/s11263-012-0605-9

-

Abstract

We address the problem of automatically detecting a sparse set of 3D mesh vertices, likely to be good candidates for determining correspondences, even on soft organic objects. We focus on 3D face scans, on which single local shape descriptor responses are known to be weak, sparse or noisy. Our machine-learning approach consists of computing feature vectors containing D different local surface descriptors. These vectors are normalized with respect to the learned distribution of those descriptors for some given target shape (landmark) of interest. Then, an optimal function of this vector is extracted that best separates this particular target shape from its surrounding region within the set of training data. We investigate two alternatives for this optimal function: a linear method, namely Linear Discriminant Analysis, and a non-linear method, namely AdaBoost. We evaluate our approach by landmarking 3D face scans in the FRGC v2 and Bosphorus 3D face datasets. Our system achieves state-of-the-art performance while being highly generic.

-

Citations

- Bibtex File [bib]

-

Plain text

Creusot, C.; Pears, N.; Austin, J.; "A Machine-Learning Approach to Keypoint Detection and Landmarking on 3D Meshes", International Journal of Computer Vision, pp.1-34, 2013, doi:10.1007/s11263-012-0605-9

-

Bibtex entry

@article{Creusot2013a,

year={2013},

issn={0920-5691},

journal={International Journal of Computer Vision},

doi={10.1007/s11263-012-0605-9},

title={A Machine-Learning Approach to Keypoint Detection and Landmarking on 3D Meshes},

url={http://dx.doi.org/10.1007/s11263-012-0605-9},

author={Creusot, Clement and Pears, Nick and Austin, Jim},

pages={1-34},

}

3D Landmark Model Discovery from a Registered Set of Organic Shapes

Clement Creusot, Nick Pears, and Jim Austin

Point Cloud Processing (PCP) Workshop at the Computer Vision and Pattern Recognition Conference (CVPR) 2012, Providence, Rhode Island.

- Paper [pdf, 4.9 MB]

- Slides [pdf, 5.7 MB]

- IEEE Link: 10.1109/CVPRW.2012.6238915

-

Abstract

We present a machine learning framework that automatically generates a model set of landmarks for some class of registered 3D objects: here we use human faces. The aim is to replace heuristically-designed landmark models by something that is learned from training data. The value of this automatically generated model is an expected improvement in robustness and precision of learning-based 3D landmarking systems. Simultaneously, our framework outputs optimal detectors, derived from a prescribed pool of surface descriptors, for each landmark in the model. The model and detectors can then be used as key components of a landmark-localization system for the set of meshes belonging to that object class. Automatic models have some intrinsic advantages; for example, the fact that repetitive shapes are automatically detected and that local surface shapes are ordered by their degree of saliency in a quantitative way. We compare our automatically generated face landmark model with a manually designed model, employed in existing literature.

-

Citations

- Bibtex File [bib]

-

Plain text

Creusot, C.; Pears, N.; Austin, J.; "3D landmark model discovery from a registered set of organic shapes", Computer Vision and Pattern Recognition Workshops (CVPRW), 2012 IEEE Computer Society Conference on, pp.57-64, June 2012, doi:10.1109/CVPRW.2012.6238915

-

Bibtex entry

@inproceedings{Creusot2012,

author = {Creusot, Clement and Pears, Nick and Austin, Jim},

booktitle={Computer Vision and Pattern Recognition Workshops (CVPRW), 2012 IEEE Computer Society Conference on},

title={3D landmark model discovery from a registered set of organic shapes},

year={2012},

month={june},

pages={57--64},

doi={10.1109/CVPRW.2012.6238915},

ISSN={2160-7508},

}

Automatic Keypoint Detection on 3D Faces Using a Dictionary of Local Shapes

Clement Creusot, Nick Pears, and Jim Austin

3DIMPVT 2011, pp. 204-211, Hangzhou, China.

- Paper [pdf, 4.2 MB]

- Slides [pdf, 3.4 MB]

- IEEE Link: 10.1109/3DIMPVT.2011.33

-

Abstract

Keypoints on 3D surfaces are points that can be extracted repeatably over a wide range of 3D imaging conditions. They are used in many 3D shape processing applications; for example, to establish a set of initial correspondences across a pair of surfaces to be matched. Typically, keypoints are extracted using extremal values of a function over the 3D surface, such as the descriptor map for Gaussian curvature. That approach works well for salient points, such as the nose-tip, but can not be used with other less pronounced local shapes. In this paper, we present an automatic method to detect keypoints on 3D faces, where these keypoints are locally similar to a set of previously learnt shapes, constituting a 'local shape dictionary'. The local shapes are learnt at a set of 14 manually-placed landmark positions on the human face. Local shapes are characterised by a set of 10 shape descriptors computed over a range of scales. For each landmark, the proportion of face meshes that have an associated keypoint detection is used as a performance indicator. Repeatability of the extracted keypoints is measured across the FRGC v2 database.

-

Citations

- Bibtex File [bib]

-

Plain text

Creusot, C., Pears, N., and Austin, J. "Automatic keypoint detection on 3D faces using a dictionary of local shapes". In 3D Imaging, Modeling, Processing, Visualization and Transmission (3DIMPVT), 2011 International Conference on, pages 204-211, Hangzhou, China, May 2011.

-

Bibtex entry

@inproceedings{Creusot2011,

author = {Creusot, Clement and Pears, Nick and Austin, Jim},

title = {Automatic Keypoint Detection on 3D Faces Using a Dictionary of Local Shapes},

booktitle = {3D Imaging, Modeling, Processing, Visualization and Transmission (3DIMPVT), 2011 International Conference on},

year = {2011},

pages = {204-211},

month = {may},

url = {http://dx.doi.org/10.1109/3DIMPVT.2011.33},

doi = {10.1109/3DIMPVT.2011.33},

keywords = {3D face detection;3D imaging condition;3D shape processing application;Gaussian curvature;

keypoint extraction;local shape dictionary;face recognition;feature extraction;object detection;},

}

3D face landmark labelling

Clement Creusot, Nick Pears, and Jim Austin

In Proc. ACM Workshop on 3D Object Retrieval, pp. 27-32, Firenze, Italy, Oct. 2010.

- Winner of the SAIC UK Biometric Research Competition:

Press release 1

Press release 2

- Paper [pdf, 459 KB]

- Slides [pdf, 1.3 MB]

- ACM Link: 10.1145/1877808.1877815

-

Abstract

Most 3D face processing systems require feature detection and localisation, for example to crop, register, analyse or recognise faces. The three features often used in the literature are the tip of the nose, and the two inner corner of the eyes. Failure to localise these landmarks can cause the system to fail and they become very difficult to detect under large pose variation or when occlusion is present. In this paper, we present a proof-of-concept for a face labelling system, capable of overcoming this problem, as a larger number of landmarks are employed. A set of points containing hand-placed landmarks is used as input data. The aim here is to retrieve the landmark's labels when some part of the face is missing. By using graph matching techniques to reduce the number of candidates, and translation and unit-quaternion clustering to determine a final correspondence, we evaluate the accuracy at which landmarks can be retrieved under changes in expression, orientation and in the presence of occlusions.

-

Citations

- Bibtex File [bib]

-

Plain text

Clement Creusot, Nick Pears, and Jim Austin. "3D face landmark labelling". In Proceedings of the 1st ACM Workshop on 3D Object Retrieval (ACM 3DOR'10), pages 27-32, Firenze, Italy, October 2010.

-

Bibtex entry

@inproceedings{Creusot2010,

author = {Creusot, Clement and Pears, Nick and Austin, Jim},

title = {{3D} face landmark labelling},

booktitle = {Proceedings of the ACM workshop on 3D object retrieval},

series = {3DOR '10},

year = {2010},

isbn = {978-1-4503-0160-2},

location = {Firenze, Italy},

pages = {27--32},

numpages = {6},

url = {http://doi.acm.org/10.1145/1877808.1877815},

doi = {10.1145/1877808.1877815},

acmid = {1877815},

publisher = {ACM},

address = {Firenze, Italy},

keywords = {3D face, graph matching, labelling, registration},

}

All the scripts and applications provided here have only been tested on Linux (Ubuntu and Linux Mint).

If you try them on other operating systems, please let me know of any interoperability problems or fixes you find.

All the programs provided here are under GPL v3, unless specified otherwise in the headers.

Where is the interesting stuff?

In order to comply with university regulations, I cannot provide the sources of programs that might be sensitive in terms of intellectual property. This applies to most of my C++ code.

However, if you have a request about a specific program I have developed (feature detector, hypergraph matcher, ...), please contact the head of the ACA group or myself for more information.

- 3D shape analysis:

- Simple stand-alone scripts:

- Non stand-alone converters:

- Landmark Related Material:

-

Readers (3)

-

ReadLdmk.py - Python Reader for .ldmk files

-

ReadLdmk.cxx - C++ Reader for .ldmk files

-

ReadLdmk.m - Octave/Matlab Reader for .ldmk files

- FRGC Related Material:

-

Mean faces - Using mean depth map of registered model sets (low resolution) (5)

-

Mean

Download .obj

Mean face generated from the registered faces of the entire FRGC v2 dataset.

Fig: Capture of the mesh

-

Mean White Female

Download .obj

Mean face generated from the registered faces of all white females in the FRGC v2 dataset.

Fig: Capture of the mesh

-

Mean White Male

Download .obj

Mean face generated from the registered faces of all white males in the FRGC v2 dataset.

Fig: Capture of the mesh

-

Mean Asian Female

Download .obj

Mean face generated from the registered faces of all Asian females in the FRGC v2 dataset.

Fig: Capture of the mesh

-

Mean Asian Male

Download .obj

Mean face generated from the registered faces of all Asian males in the FRGC v2 dataset.

Fig: Capture of the mesh

- Videos:

This research project has been partly supported by the European Union FP6 Marie Curie Actions MRTN-CT-2005-019564 "EVAN"